“Obviously humans drive around with vision, so our neural net is able to process visual input to understand the depth and velocity of objects around us,” Karpathy said. The main argument against the pure computer vision approach is that there is uncertainty on whether neural networks can do range-finding and depth estimation without help from lidar depth maps. “We deleted the radar and are driving on vision alone in these cars,” Karpathy said, adding that the reason is that Tesla’s deep learning system has reached the point where it is a hundred times better than the radar, and now the radar is starting to hold things back and is “starting to contribute noise.” Supervised learning But it has recently started shipping cars without radars. Previously, the company’s cars used a combination of radar and cameras for self-driving. And Tesla is already moving in this direction, Karpathy says. With the general vision system, you will no longer need any complementary gear on your car. “But once you actually get it to work, it’s a general vision system, and can principally be deployed anywhere on earth,” he said. Karpathy acknowledged that vision-based autonomous driving is technically more difficult because it requires neural networks that function incredibly well based on the video feeds only. And it must do all of this without having any predefined information about the roads it is navigating. The self-driving technology must figure out where the lanes are, where the traffic lights are, what is their status, and which ones are relevant to the vehicle. “Everything that happens, happens for the first time, in the car, based on the videos from the eight cameras that surround the car,” Karpathy said. Tesla does not use lidars and high-definition maps in its self-driving stack. “It would be extremely difficult to keep this infrastructure up to date.”

“It’s unscalable to collect, build, and maintain these high-definition lidar maps,” Karpathy said. It is extremely difficult to create a precise mapping of every location the self-driving car will be traveling. “And at test time, you are simply localizing to that map to drive around.” “You have to pre-map the environment with the lidar, and then you have to create a high-definition map, and you have to insert all the lanes and how they connect and all the traffic lights,” Karpathy said.

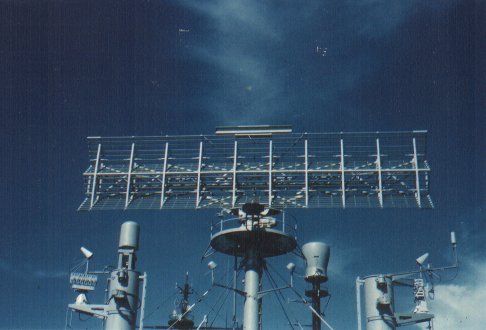

However, adding lidars to the self-driving stack comes with its own complications. Lidars provided added information that can fill the gaps of the neural networks. This is why most self-driving car companies, including Alphabet subsidiary Waymo, use lidars, a device that creates 3D maps of the car’s surrounding by emitting laser beams in all directions. Neural networks analyze on-car camera feeds for roads, signs, cars, obstacles, and people.īut deep learning can also make mistakes in detecting objects in images. Necessary ingredients include: 1M car fleet data engine, strong AI team and a Supercomputer /A3F4i948pDĭeep neural networks are one of the main components of the self-driving technology stack.

Gave a talk at CVPR over the weekend on our recent work at Tesla Autopilot to estimate very accurate depth, velocity, acceleration with neural nets from vision. He also explained why Tesla is in the best position to make vision-based self-driving cars a reality. Speaking at CVPR 2021 Workshop on Autonomous Driving, Karpathy, who has been leading Tesla’s self-driving efforts in the past years, detailed how the company is developing deep learning systems that only need video input to make sense of the car’s surroundings.
